My son was three months old when he spiked a fever, hovering right around 101°F. He'd had surgery as a newborn, and I was protective in a next-level kind of way.

I called the emergency pediatrician line, opened seven browser tabs, and read everything I could find. If ChatGPT had been available, you can bet I would have asked there too. The sources didn't agree. Some said go to the hospital immediately. Others said to wait it out, given his lack of other symptoms. I felt paralyzed.

Late-night doom Googling

We did the only thing we knew how to do: we went to the hospital. They immediately told us we shouldn't have come, that it was riskier for him to be there, and sent us home, tails between our legs.

A few months later, I founded Dewey Labs, an expert-first AI company. Which might seem like an odd response to a parenting crisis. But here's the thing:

I lie awake at night thinking about the world my kids will inherit.

Will they lose their ability to think for themselves? To tell real from fake? To find the signal in the noise?

And that's just what I can see coming. The speed of this technology makes it nearly impossible for any institution, no matter how well-meaning, to predict the future, let alone control it.

So why am I building AI?

When Scott and I decided to start a new company, we explored dozens of ideas around a few values we hold dear: increasing access, supporting creators, and helping humanity thrive. When ChatGPT hit the market, we took a pause. Did we want to build with this?

I didn't. I saw danger everywhere: in every confident wrong answer, in every piece of advice scraped from who-knows-where. I wanted the genie to go back in the bottle.

Scott saw something else. What if we could use this to connect people to knowledge they'd never otherwise access? What if, instead of breaking trust, we could build it?

Here's what he helped me understand: This technology isn't going away. I can step back and watch what gets built, or I can help shape it. For me, that's not really a choice at all.

I don't know how AI will shape our world. I believe it will do great good and great harm, the way countless technologies before it have. But how much good? That's within our control. That's shaped by what we build and how we build it.

You can't steer a ship you're not on.

So we got on board. Not with blind optimism, but with a clear first problem to solve.

We sat down to build for the users we know best: ourselves. What would have helped us that night in the ER?

We already knew who we trusted most for parenting questions: Emily Oster. Her books were on our shelves, her newsletter in our inbox. So we built a basic version of a safe, reliable, controlled "Emily Bot."

It was addictive. As soon as the prototype was working, we sent it to a few friends, who sent it to a few more. Every parent immediately felt the difference. When you asked about sleep training, you got Emily's nuanced, research-backed perspective, not a mashup of every parenting philosophy on Reddit. And when it didn't know something? It said so, a superpower most LLMs haven't learned. It also cited exactly where each answer came from. It was rough, but it felt like magic compared with a late-night panic Google.

We shared the early version with Emily, who was interested but clear: she'd only move forward if we could guarantee accuracy and complete transparency. No making things up. No compromising the trust she'd built with her community.

That became our north star.

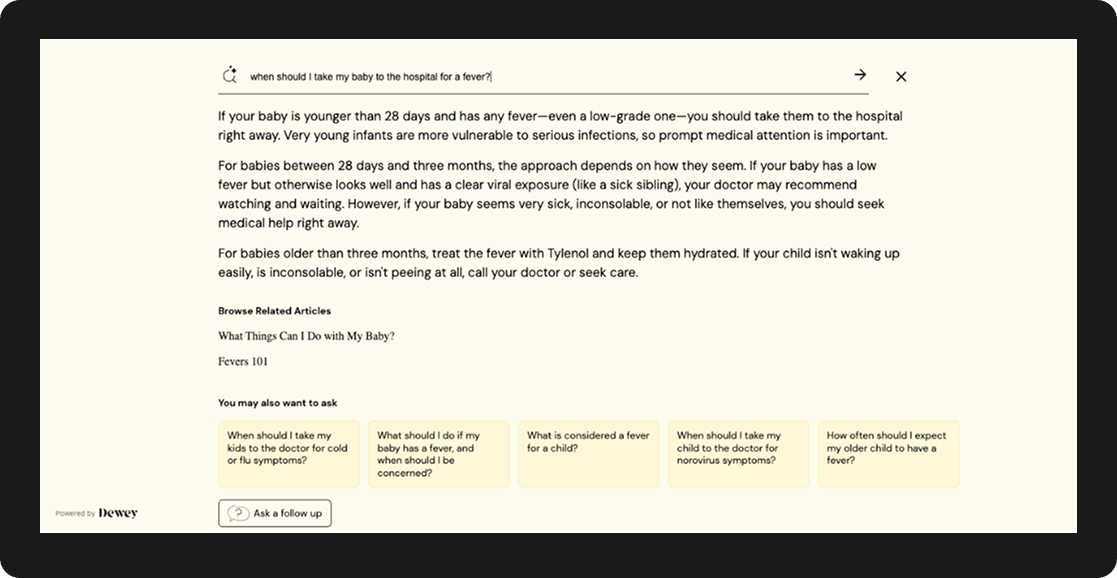

Today, when parents face that 3 AM fever, they don't have to choose between seven contradictory sources. They get Emily's perspective in seconds, fully cited and consistently accurate. Dewey connects thousands of people to expert knowledge every day, not just from Emily but from researchers, journalists, and specialists across fields. We're building the bridge between human expertise and the people who need it.

And that bridge matters more than ever. Because here's the paradox: as AI gets more powerful, human expertise becomes MORE valuable, not less.

Right now, we're facing a crisis many people didn't see coming. AI models are increasingly training on AI-generated content, essentially eating their own output. Researchers call it "model collapse," and it's already happening. The more AI slop floods the internet, the worse these models become at understanding reality. They start converging on bland, internet-average answers that miss nuance entirely.

The solution isn't less AI. It's better source materials.

What we need is thoughtful, carefully researched, net-new thinking. Human genius. The kind of knowledge that can only come from deep, sustained engagement with real problems: the pediatric nurses who founded Moms on Call after working with thousands of families, the careful consideration of data and tradeoffs that What Works for Health brings to public health decisions, the deeply personal perspective of Katy Clarke at Untold Italy, where on-the-ground experience and earned expertise are inseparable.

We're not building clones. We're not trying to write your next book or replace teachers, doctors, or therapists. We're building tools that increase access to expert knowledge, so those experts can focus on what only they can do: creating the next insight, serving the next patient, pushing human understanding forward.

This is why we built Dewey. If you're an expert whose knowledge matters, we want to work with you. If you believe trust is the currency that matters most in an AI world, we're building for you.

We're building AI with our eyes wide open. We're betting everything that human expertise doesn't just survive in the AI age: it thrives.