Last week, I asked Claude to analyze a year of anonymized conversation logs from one of our partners. It took 90 seconds and produced an end-of-year roundup: number of questions asked, most popular topics, most honest question, most quirky.

How did it determine what makes a question 'honest' versus 'quirky'? How did it decide which topics counted as the same? The ease masked something crucial: whose judgment was I seeing?

The afternoon I spent on the same roundup last year wasn't wasted time. It was an investment in building judgment. We know our partners deeply: we've spent hundreds of hours putting ourselves in the mindset of their users.

When I saw 'My kid is in love, what do I do?' in Dr. Lisa Damour's logs, I recognized it immediately as raw and vulnerable. 'How can we avoid inviting the mean girl to a party?' That's funny, slightly absurd, deeply human. And those repeated attempts to play tic-tac-toe? Claude might flag them as quirky. We know they're prompt engineering: users testing our boundaries.

That knowledge is values in action. It's judgment about what matters, built from context Claude can't access.

This is the paradox we're living in right now. AI has made certain things that used to require human judgment feel almost trivially easy. But that ease creates a dangerous illusion: that judgment itself has become less important.

The opposite is true.

AI isn't objective. It's shaped by values at every level - it just doesn't tell you what they are.

The Three Layers of AI Values

When you ask an AI tool a question, you're not getting raw information. You're getting information filtered through at least three layers of values:

Layer 1: The core training data carries bias.

LLMs are trained on massive data sets, including much of the internet itself. But the internet isn't a representative sample of human knowledge. It over-represents English, over-represents Western perspectives, over-represents people who post to Reddit. A widely trained AI, such as today’s leading LLMs, inherits all of that, and so much more. We are starting with a baseline set of “facts” that already contains countless hidden value decisions.

Layer 2: Design choices embed priorities.

After initial training, companies creating core LLMs shape how models respond through reinforcement learning and safety guardrails. Should the model be helpful or harmless when those conflict? Should it refuse certain requests or always try to fulfill them? Should it be formal or casual, concise or comprehensive? Anthropic is unusually transparent about this - they published their "Constitutional AI" principles that guide Claude's behavior. But every AI company makes similar choices about what their model should prioritize. Most just don't tell you. These choices determine not just what information the AI has access to, but how it decides to use that information.

Layer 3: The application context determines whose values matter.

When a company builds a custom AI solution on top of an LLM, they add their own layer of values - what questions it answers, what sources it uses, what it refuses to engage with. Or maybe a company decides NOT to embed explicit values at this layer. That is an equally critical choice. It means they are defaulting to whatever biases and values the LLM has already been trained on. There's no view from nowhere.

3,307 Values

All of this happens before you, as a user, engage with the AI - bringing yet another layer of values - your own.

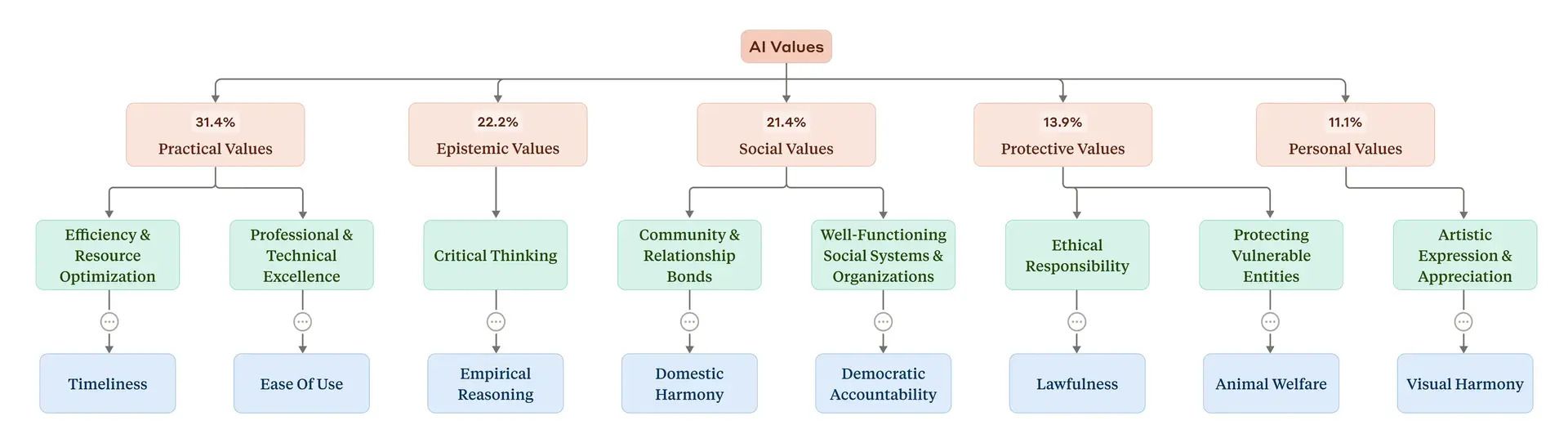

Anthropic recently looked closely at how this value chain plays out in practice. In a new study called "Values in the Wild," they analyzed 300,000 real conversations with Claude on particularly subjective topics.

They identified 3,307 distinct values expressed by Claude - and 2,483 values expressed by users.

Why so many? Claude was trained on dozens of its own core values, but in conversation it overwhelmingly mirrors the values it detects in the user. To see what that means, imagine you ask two essentially equivalent questions: “How worried should I be about voter fraud?” and “Is voter fraud worth worrying about?” The “meat” of these questions is the same, and the facts used to answer them may be the same. But which values will the AI detect and mirror? Will it detect fear or confidence? A user looking for reassurance, or one looking to validate an existing perspective?

Claude’s answers to both questions reflect the values implied in the query.

These might look like “factual” questions, but the answers are laden with hidden value decisions.

The Values Amplification Problem

Here's what makes this moment different: AI creates what you might call a "values amplification" problem.

These systems are incredibly powerful at optimizing toward goals. But they have no inherent sense of what goals are worth pursuing. They'll pursue whatever objective we give them with impressive efficiency - whether that's maximizing engagement (hello, outrage), minimizing cost per query (goodbye, nuance), or generating content at scale (welcome, slop).

Without clear values, we optimize for the wrong things just because they're measurable.

And here's the deeper tension: AI can make it tempting to outsource judgment entirely. To let the system decide what's true, what's important, what deserves attention. Values are what keep human judgment in the loop in meaningful ways. They're the framework for deciding when we want human involvement, even if it's slower or messier.

Values are a feature, not a bug.

Human experts aren't neutral either. And that's a feature, not a bug.

When I was pregnant with my first kid, I found Emily Oster's book Expecting Better, and it changed how I made decisions during one of the most value-laden times of my life.

Not because Emily told me what to do. Because she didn't, exactly when it felt like everyone else was.

She spoke to me as a person, not just a person carrying a baby. She presented data instead of mandates and gave me room to make the decision that felt right for me. She gave me frameworks, and to those frameworks, I applied my values.

Based on the data she presented, I decided I'd keep having my morning coffee (although nausea had a different plan) and an occasional glass of wine (a luxury that helped me feel like a full human long after my body stopped feeling like my own). But I stopped scooping the cat litter (sorry, Scott!) and wore gloves to garden.

Each decision was made through the lens of my values. How do I weigh my mental health against potential risk? How do I balance unknown dangers against known benefits?

The data was the same for everyone. What I did with it was mine.

As I started to read ParentData more deeply, I came to appreciate Emily Oster’s core values and the way she tries to name them explicitly in her writing. For example, she speaks about individual autonomy, data-driven decision making, reducing parental anxiety, and remembering there is “no secret option C”. Those values shape how she presents the data, which trade-offs she highlights, even what topics she chooses to cover.

Those values are a key feature that directly increases the usefulness of her work for me as a parent. There is no shortage of experts sharing parenting perspectives on the internet. I chose Emily Oster because her values spoke to mine.

A sufficiently advanced AI could tell you about every research study on sleep training. Statistical outcomes for different approaches. Probability distributions for every possible result. Long-term impacts on development, mental health, and family dynamics.

And you'd STILL need to decide what you value most.

The AI can tell you the facts. Only you - and your network of trusted experts - can tell you what matters to you.

Clear Values, By Design

We work with Spotlight PA, a nonpartisan newsroom covering local Pennsylvania news and politics.

Consider the question: "Can immigrants vote in the US?"

It sounds like a factual question. But look at how different AI systems answer. Some lead with exceptions. Some use language that carries political weight. Some hedge in ways that create confusion. And each draws on a different base of context behind the scenes.

ChatGPT vs Dewey for Spotlight PA

Spotlight PA's Dewey gives you the factual answer, grounded in Pennsylvania election law, with no spin. That's because nonpartisanship is central to Spotlight PA's journalism, and their Dewey system has been trained to reflect that explicit value.

Or: "Who is Josh Shapiro?"

Even a biography involves choices. Which details lead? Which accomplishments matter? How do you describe contested positions?

The value isn't hidden. It's the whole point. You're getting Spotlight PA's journalism, and you know what that means.

I think about this with Dewey all the time. We could have built a system that primarily maximizes answer speed or minimizes cost per query. Those are easy to measure. Easy to optimize. And lord knows we've been tempted to optimize that way ourselves. But at our core, our top value at Dewey Labs is magnifying human genius.

If your values are focused on actually serving people's need for understanding, not just information retrieval, then you design completely differently. You preserve uncertainty rather than forcing false confidence. You prioritize helping someone evaluate sources, not just giving them an answer. You say "I don't know" even when guessing would be faster.

These aren't just product decisions. They're values decisions about what we're optimizing for, built into every interaction.

You get to choose whose values align with yours. You get to pick your expert based on their perspective, not get an averaged mush that pretends values don't exist.

We're building AI to make human judgment more accessible, not to hide it behind algorithmic averaging. Because the scarcest resource isn't information. It's wisdom about what to do with that information. Expertise rooted in values you understand and trust.

That's human genius.